I already ranted a bit about how the usual mathematical notation serves to obscure rather than reveal the operation of neural networks with gradient descent. So, this post, I’m going to explain unambiguously how to code neural networks, without throwing people into bewildering notation.

Gradient Descent

Imagine a hiker, heading downhill. He can take steps that change his position in two dimensions – east/west or north/south – seeking to reach the minimum altitude possible. Gradient descent is a lot like that. You’re adjusting your network in many dimensions – each weight can be considered as a different direction – seeking to reach the minimum error possible. So how do we do that?

I’m going to start by saying what the three operations in training a network by backpropagation are, then explain how to do each one.

Forward-chaining is how you use a neural network to solve problems. You load a problem into the network at the inputs, do your forward-chaining, and you read the solution out of the network at the outputs. When you’re loading a problem into the network, you should map the input values to a range between -1 and 1. When interpreting the outputs, you should not require the network to produce outputs far outside that range. There other ways to do things, but following these rules is the simplest way to get good results without doing anything that would need more explanation.

Back-propagation is how you decide what corrections your network needs. Every time you have done forward-chaining, if you know what answer the network should have produced, you have a chance to do back-propagation. Backpropagation means figuring out what direction leads downhill. The bigger the batch of examples you’ve done backpropagation for, the more clearly you can see which direction is downhill. With any one example, you may see a different ‘downhill’ direction. Batches are a way of averaging them out, trying to find which way is downhill for most examples. To do backpropagation you calculate how far off (and in what ways) the network’s answer is from the right answer, load corrections into the network at the outputs, then use those to figure out the corrections for the rest of the network. This results in information getting stored in the neural network that says how the network needs to change in order to bring its next answer closer to the right answer.

Training is actually taking the step downhill, or using that stored information to apply corrections to the network.

Now we can talk about the network itself. The network has two kinds of things in it, which I’m going to call nodes and connections. You can call them neurons and synapses if you like, although biological neurons and synapses are a lot more complicated.

The nodes store three numbers which I’m calling input, output, and feedback. Each connection connects an earlier node (closer to inputs) to a later node (closer to outputs) and stores two numbers which I’ll call weight and correction.

Forward-chaining and backpropagation are pretty much the same in all neural networks, but the training operation changes depending on training strategy. There are a bunch of different strategies for training the network weights, mostly trying (and mostly succeeding) in getting faster results. But the steepest-gradient-descent method is the starting point, because it’s the classic mathematically-justified method that nearly all others are based on. So that’s the one I’m going to discuss in this article.

Activation and Derivative

Before I can explain how steepest gradient descent operations work, I have to start with two functions named Activation and Derivative. Roughly speaking, activation maps a node’s input to its output and derivative says what the ratio is at that point between changes in input and changes in output.

This is how the system decides which direction it needs to go to reach a lower error, (ie, a value closer to the “right answer”) and by comparing the derivatives in all parameters it can see which combination of changes is the “steepest gradient” toward lower error. Steepest gradient descent means always going downhill in the direction of the steepest grade. Eventually you’ll reach the bottom of a valley. Hopefully the deepest valley, or at least one nearly as deep as the deepest.

In neural networks it’s not quite that simple. You have nonuniform data, and each individual example presents a different error map. In order to see the “simple” or overall fitness landscape that represents the error gradient for all the data, you have to get information from every single example case to make each step.

And that gets us back to Activation and Derivative. At every location in the map, the “error” that a given example shows is measured in terms of how far we missed each output that we wanted a particular value for. Each weight we adjust has us moving one step in one direction in that many-dimensional map. And we can use Activation and Derivative to work backward from the outputs and figure out whether and how much any such step will reduce or increase the error. These functions are how we figure out which direction is downhill in terms of the error, and how steeply downhill that step is, with respect to every different direction we can move.

There are a bunch of different Activation functions you can use. Each has a corresponding Derivative function.

Four popular sigmoid activation functions are

- tanh(x),

- 2 * Sigmoid(x) – 1, (where Sigmoid(x) is 1/(e-x + 1))

- arctan(x),and

- x/(1+abs(x)).

The corresponding deriv functions, respectively, are:

- 1 – sqr(tanh(x)),

- 2 * (Sigmoid(x) – sqr(Sigmoid(x))),

- 1/(1+sqr(x)), and

- 1/sqr(1 + abs(x)).

The first couple are especially popular because their corresponding derivative functions can be calculated simply in terms of their output. I happen to like the last one because it behaves better in deep networks. The Sigmoid, when used as a proper noun, means 1/(1+e-x), and that’s what you use in the activation and derivative functions given as number 2 in the above lists.

Mathematically speaking, if a function has a constant upper asymptote and a constant lower asymptote, and its derivative has the same sign from negative infinity to positive infinity, and it’s continuous and differentiable at every point then it’s a sigmoid function. We’ve been relying on sigmoids of one sort or another since the 1970s because they seemed like the right idea mathematically.

But people have been talking about sigmoid functions in neural networks, and this has been unfortunate for two reasons. First, more often than not people hearing them thought they meant THE Sigmoid. There is an important difference between THE Sigmoid, and the sigmoid equation that is best for neural networks, which I give as number 2 above. The difference is that using the one I give above, the output is centered on zero.

The canonical sigmoid is utterly wrong when used as an activation function in a deep networks, for two reasons. The first, and less important, is because of its extremely flat tails on both ends. This means huge areas of the landscape are flat, and the downhill grade can be so slight that the hiker can’t find it. The second, and far more important, is that because it’s not zero-centered, it is deceptive. The hiker starts very far from where the valley is likely to be, and In deep networks the hiker will go a very long way, very slowly, along a very slight slope, and completely miss the main valley.

Sigmoids are usually presented as though they are interchangeable. They are not. In fact THE sigmoid, as we’ve discussed, is intractable in training any neural network of more than two hidden layers. Don’t use it, except on toy problems. The optimized sigmoid is viable on networks of six hidden layers but its flat tails still make for broad flat areas that can show no gradient in places where otherwise that gradient would prevent you from getting stuck in some local minimum (a shallow depression where it’s uphill in all directions but it’s not the deep valley floor you were after). Tanh, given as number 1, and arctan, given as number 3, are more reliable in networks of up to ten hidden layers. My favorite sigmoid, which I gave as number 4, is called the ‘softsign’ function. It’s reliable in networks of up to fifteen hidden layers, and usable to some extent in training networks even deeper than that. But it presents shallower gradients, so it can be slower.

The Ramp Function

But it turns out that sigmoids aren’t necessarily the right things to use in the first place. They can be better when you want to do function approximation, very closely matching smooth curves. But for most problems, especially in large or deep networks, it turns out that the Ramp Function is better. The Ramp Function isn’t a sigmoid for two reasons; first it’s not differentiable at zero. Second, it doesn’t have an upper asymptote. And it turns out that if you use the Ramp Function instead of a sigmoid, you may need a few more nodes per layer but neural networks of most kinds work just as well and they train a lot more easily. In very large and complicated networks, they work better. Better yet, the Ramp function is one or two one-cycle machine instructions depending on your architecture, and its derivative is a single one-cycle machine instruction. It doesn’t get more efficient than that. The ramp function of X is X or zero, whichever is more. Its derivative is one if X is greater than zero and zero if the input is less than zero. How trivial is that?

so the Ramp Activation Function which isn’t a sigmoid because it’s got no upper asymptote and isn’t differentiable at zero, is

(X > 0) ? X : 0

and its corresponding “Derivative”, which isn’t really a derivative because it gives a definite result at zero where the actual derivative is not defined, is

(X > 0) ? 1 : 0

And those are what I have to recommend to anyone who wants to do something deeper than three hidden layers now, and I just shake my head at all the time I spent using sigmoids in big networks. The ramp function’s range isn’t centered on zero, but we don’t need a corrective subtraction, because getting a zero output from the ramp function does not require an input of negative infinity. In fact if you ask for a zero output, it just requires an input of zero or less. So your hiker won’t go wandering off toward negative infinity in the lower-layer directions and miss the valley completely.

Because they have both an upper and lower asymptote, sigmoids present broad flat areas where gradients are hard to find on both sides of their linear range. That complicates training because training has to balance between the top flat area and the bottom flat area, making it harder to get all the parameters right. Sigmoids also impose scale relative to the size of their output range, which complicate coding problems for input and output – whereas the ramp function, being limited only at its lower edge, is self-scaling.

How to do the three operations.

Forward-chaining

All nodes’ inputs are set to zero before a forward-chaining operation starts. During forward-chaining, each node gets forward signals from all the connections that come to it from earlier nodes, and each such signal is added to that node’s input. When all forward signals have been collected, the node’s output gets set to sigmoid(input). The output is then sent as a forward signal to all the connections that go from that node to later nodes.

During forward-chaining each connection gets one forward signal from its earlier node. It multiplies that forward signal by its own weight and sends the result as a forward signal to its later node.

The only other thing you need to know about forward-chaining is how to handle the input and output nodes. Input nodes get exactly one forward signal, and that’s you loading the problem into them. And with output nodes you usually skip the sigmoid; once all the input is added up, you just take that and that’s your output.

Backpropagation

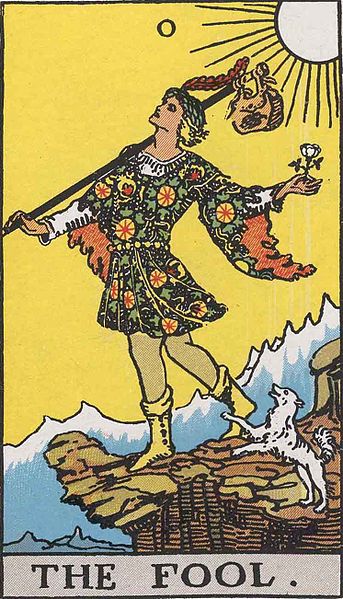

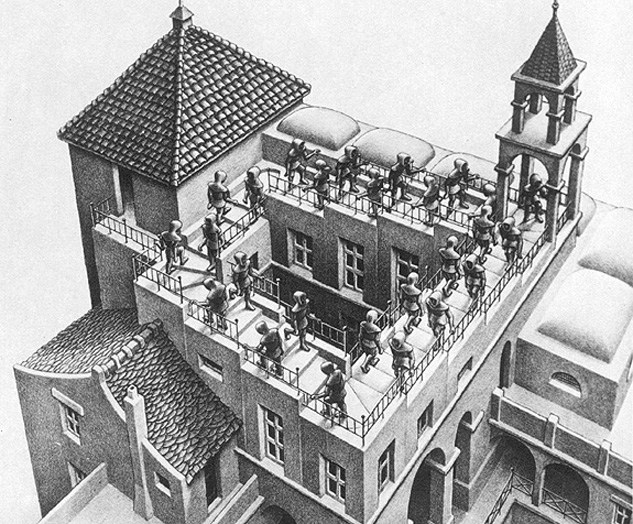

First, backpropagation works best if you either use all your examples in every batch, or select examples from your training data in a random sequence on each pass through the data. Gradient descent is like a hiker headed downhill seeking the valley floor, but each batch of examples has a different ‘downhill’ direction. If you take the batches in the same order each time….. you can get this result.

All nodes’ feedback is set to zero before each backpropagation operation starts. (in fact with a clever implementation you don’t even need to store separate feedback values for the nodes. It’s just easier to explain with them).

During backpropagation each node gets backward signals from all connections that connect it to later nodes. All these signals are added up and the sum is the node’s feedback for that example. Then the node’s feedback is multiplied by deriv(input) and that value is sent as a backward signal to each connection that connects from an earlier node to the node.

During backpropagation each connection gets one backward signal from the later node it connects to, multiplies that signal by its weight, and transmits the result as a backward signal to the earlier node. Then it multiplies the signal it got from its destination by the input value of its source, and adds the product to its own corrections value.

Backpropagation stops at the input nodes because they have no connections to earlier nodes.

The only other thing you need to know about backpropagation is how to handle the output nodes. They get exactly one backward signal, and that’s you setting their feedback for the difference between their current output and their desired output. But how do you figure out what feedback to set?

I’ll explain why this is true in another article, but for now here is the empirical method. Subtract what you wanted from what you got to get the difference. Square the difference. If what you got was less than what you wanted, the learning rate times half the square is your feedback for that output node for the current example. If what you got was more than what you wanted, then zero minus the learning rate, times one-half the square, is your feedback for that output node for the current example.

You can use the learning rate divided by the number of examples that the training is applying instead, if you like; it’s the same as having a smaller learning rate and convenient so that you don’t have to change the learning rate if you change the number of examples per batch.

Training

Training is simple. For every connection, take the corrections value and add it to that connection’s weight. Then set the corrections value to zero.

And that’s it.

That is the whole algorithm for classical steepest-gradient-descent neural networks. It is simple and easy to implement, and it’s a damn crime to hide it behind opaque, hard-to-understand equations. If you use a learning rate that’s small enough that the weights don’t change by more than a very small fraction of the range each time, this will work.

A bias node will help your network learn. No matter what the problem you load into the rest of the network is, load the bias node with the value one, every time. You don’t actually need to represent it as a node, because you can just write code to handle things as if it existed. But you need all those connections with weights and corrections values, so leaving out the node doesn’t actually save you much and leaving it in can be simpler.

Initialization

There is technically a fourth operation, which is initializing the network. It’s usually dismissed with a sentence or two in most works on neural networks because, you know, it’s unimportant. It’s not how the really interesting learning stuff happens, so it’s not what the people are usually there to write about.

In fact, it’s so unimportant than unless you do it right, the really interesting learning stuff won’t work at all. So, hey, nothing we need to bother talking about, right?

Lots of books recommend using small random values, but most of them don’t say how small or what distribution of random. And getting it wrong is a disaster. Putting a small random value in literally every weight really only works if you’re solving very small problems, because when you add a whole bunch of little weights, the law of large numbers says you get closer and closer to “average”, no matter what your input is. That approach in a larger network will result in starting the hiker in another broad flat area where it’s hard to justify taking any step at all. If nodes have more than a dozen incoming connections, It’s best to set only as many weights as it takes for each node to get signals on about a dozen of its incoming connections.

There are several widely used heuristics for initialization and the only widespread point of agreement is that all of them are crap. Agreement on which one is least crappy is nowhere near as widespread.

Here are three of the heuristics for initialization that are least bad, in my opinion and experience. They are all based on Glorot/Bengio, or “Xavier” initialization.

Glorot and Bengio proposed a heuristic of initializing all weights with a uniform value drawn from the range +/- sqrt(6)/(sqrt(i + j) * deriv(0)) where i and j are the number of output points in the previous layer of nodes and the number of input nodes in the subsequent layer of nodes. For example if you have a layer of 20 nodes whose output is being used as inputs for a layer of 30 nodes, then i + j is 50, and your derivative at zero is 1, then you’d initialize all the connections with weights in the range -sqrt(6)/sqrt(50) to +sqrt(6)/sqrt(50). This works better other known initialization schemes for sigmoids, if you plan to write a weight into literally every connection.

But you don’t have to write a weight into every connection, and if you do, the law of large numbers isn’t your friend on deep networks, because it means that averages where you measure the error will get closer and closer to zero, and your training will have less to distinguish them by. To do Modified Glorot-Bengio, use regular Glorot/Bengio on the connections from any layer with twelve nodes or fewer. On connections from larger layers, only initialize enough weights that every node on the destination layer has twelve incoming connections and every node on source layer has the same number of outputs, and set j = 12 in the above equation.

When you’re done initializing, depending on the topology of your network, most of the weights may still be zero, and that’s okay. Modified Glorot-Bengio usually gets a faster start in training, especially on large or deep networks. Nobody knows whether the solutions these networks eventually find are of a different quality than unmodified Glorot-Bengio. There has been debate on the topic but so far there is neither any known reason to believe that they are better, nor any known reason to believe that they are worse.

I have found a third heuristic that seems to give reasonably good results in general neural networks (where there isn’t any particular concept of layers) which are deep (more than four or five connections traversed on most paths from input to output) or recurrent networks that are wide (more than four or five connections on most paths along repeating cycles). Use modified Glorot/Bengio initialization (initialize no more than 12 incoming connections at each node) with the number of incoming connections initialized as j and the mean number of outgoing connections of their source nodes as i. The best that can be said for this is that it seems to work most of the time, although there is no convincing theoretical justification for it.

Momentum

With momentum, your hiker has traded in his boots and walking stick for a gravity kart. It can get to the bottom faster, but it may crash if you have to make tight corners on the way, and you may not be able to stop if you’re moving too fast when you get there.

The momentum technique is a popular and well-founded variation from the steepest-gradient descent algorithm I’ve described above. Momentum is worthwhile, for several really good reasons. Also, it’s incredibly easy to implement. You change one line in your training routine. Instead of setting the corrections values in the connections to zero after using them, you multiply the corrections value by some number (called the ‘momentum constant’) between one and zero. Like the learning rate, there’s no really perfect method for picking its value; the best value varies from problem to problem. If you feedforward and backpropagate all examples between each time you train, there’s no really compelling reason why you should be using momentum at all. If you train after every example, and you have a lot of examples, then a momentum constant of 0.99, or maybe even more (with a correspondingly tiny learning rate) is not inappropriate.

Momentum has several important benefits. The simplest is that steepest gradient descent is subject to very slow progress along shallow gradients far from a good solution. Momentum can make that go a lot faster, because when you’re on a shallow gradient far from a good solution, most examples will tend to pull that weight in the same direction and therefore momentum will gradually pick up speed. Of course, using a Ramp function for activation will make that go faster too, because it immediately eliminates half of all the no-gradient fields just by having one side without an asymptote.

But there’s another benefit that’s a lot more important, which most people never realize is among the reasons their steepest-gradient-descent networks without momentum (when trained one example at a time) are really really slow. Steepest gradient descent can wind up going VERY slow if it is proceeding ‘downhill’ toward a good solution in a ‘diagonal groove’ that requires two or more weights to change at the same time because if one of them (or not enough of them) change without the others changing, it makes the solution worse. Momentum can straighten out these paths and make the different weights change together, even when individual examples only ‘pull’ in one direction. This matters a lot less if you backpropagate on more examples (bigger “batches”) between each time you train – but that means each training step takes longer, so it trades one specific kind of slowness that happens with respect to some weights, for a less extreme but more general slowness that happens with respect to all of them.

Momentum makes a different tradeoff; you escape both the specific and general slowness, and you can train after each backpropagation, but your steps are less accurate, because to some extent they’re directed by what the slopes were in examples that you saw ten or fifty steps back along your path. You may still be going ‘east’ (changing one weight) because you just came down a hill with a steep west-to-east slope, even if the gradient where you are now is more north-to-south and steepest gradient descent would mean changing a different weight. But you just haven’t been able to make that turn yet because you were going too fast.

Momentum also has a couple of other drawbacks. For example when you reach the valley floor you can’t stop immediately. If you have too much momentum, it can take you right past the best solution and up over another ridge to a place where ‘downhill’ leads in a different direction to a local minimum that’s not as good. You shouldn’t apply momentum to the connections that lead to the Bias Node; it makes learning noticeably more random and unreliable if you do. And finally momentum is absolutely poisonous to backpropagation in recurrent networks. If you’re doing anything with a whiff of recurrency, batch training will work and momentum absolutely won’t. But how to do backpropagation in recurrent networks (backpropagation through time) is beyond the scope of this article.

Anyway. That’s the simple algorithm behind steepest-gradient-descent and steepest-gradient-descent with momentum, including batch training and a couple of network-initialization procedures that work for large networks.

Next time I write about neural networks, I may explain the math behind why this algorithm works, and maybe even write a short Mathematician-to-English glossary for people who want to understand what the heck they’re talking about.

By the way, feedback is very much welcomed on this article. If it helped you, or if I got something wrong, PLEASE write feedback and let me know. I tried to write the article I wish someone had written for me back when I was first getting into coding neural networks; I’d love to know if I helped anyone, and I’d hate to have it be up and also wrong.